Web developers: your dev sites are a hack threat

One of the best practices for a web developer maintaining or updating a website is to create a development version and not to work on the live site.

There are many reasons why this brings benefits, from design tweaks and testing new functionality through to trying out concept ideas without affecting the live website.

It also means that you can better manage client expectations and experience by showing them something within a real, working environment.

However, the biggest security risks often start with your own people, not with DDoS attacks or direct hacking of a live site.

The enemy is from within.

Us.

Or rather, our memory or poor practices.

What do I mean by that?

One of the most common areas of vulnerability is a development site that a web developer forgets to maintain. The framework and the plug-ins it uses need the patches that the likes of Magento or WordPress issue regularly, and the development copy needs them just as much as the live site. Worse still, developers forget that the development site is there at all, particularly when work on it is only sporadic.

All too often we adopt websites from other developers where the development site, usually located in a subdomain like dev.domain.com, has been hacked because it was running an out-of-date framework or plug-in and a vulnerability was exploited.

It’s not usually noticeable at first glance but the impact of a hacked development site can be catastrophic for the live web domain and its reputation.

And unless specific server settings are in place, the hacker can then move sideways to the live website or, in the worst case, to other domains on the same hosting server (this is typically done once the hacker has gained access to the out-of-date development site and uploaded a malicious script, such as a “bash script”).

As a developer, it is vital to keep the framework and plug-ins on your development sites up to date, or to delete the subdomain completely if you are not using it.

We also adopt a lot of websites from other developers where Googlebot has not been told to ignore the development site (by adding a noindex instruction across the whole development site).

Around half of the sites we inherit from other developers have a development site live and indexable by Google.

As well as a security risk, this causes havoc with search optimisation – Google doesn’t like finding two versions of the same page on the internet, essentially thinking “I don’t know who to trust here, so I’m trusting no-one”. If the development site is hacked, even in a subdomain, and Google finds it, you’ll get your search results showing “This site may be hacked”, which will stop most people clicking through to your live site.

A small change makes a big difference

- Make sure that you update the framework and plug-ins on your development site at the same time as updating the live site. It’ll only take 10 minutes and could save you a day of grief.

- Completely delete the clone of the website on the subdomain if you are not using it.

- Ensure the development site has a Disallow rule in robots.txt so that it is not crawled by Googlebot, and a noindex instruction so that its pages are not indexed. You do not want your dev site URLs getting indexed and found by users! In most CMS frameworks, such as WordPress, you can simply tick a box to discourage search engines from indexing the dev site.

- If using WordPress, make sure the ‘Discourage search engines from indexing this site’ box is ticked as soon as you have installed WordPress on the development hosting space.

Robots.txt file example:

When placed in the web root:

# Block all robots from accessing a folder

User-agent: *

Disallow: /dev/

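When placed in the development folder (this works when that folder is the document root of a subdomain such as dev.domain.com, because crawlers only read robots.txt from the root of a host):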

# Block all robots from accessing the current folder

User-agent: *

Disallow: /
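If you want to sanity-check rules like these before uploading them, here is a minimal sketch using Python's standard `urllib.robotparser` module. The `is_crawlable` helper and the `example.com` hosts are our own placeholders for illustration, and the standard library parser will not match Googlebot's behaviour in every edge case, so treat the result as a rough check only.

```python
from urllib.robotparser import RobotFileParser

# The rules as they would be served from the root of the dev subdomain.
DEV_ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""

# The rules as they would be served from the web root of the live domain.
ROOT_ROBOTS_TXT = """\
User-agent: *
Disallow: /dev/
"""


def is_crawlable(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # dev.example.com and example.com are placeholder hosts.
    checks = [
        (DEV_ROBOTS_TXT, "https://dev.example.com/any-page"),
        (ROOT_ROBOTS_TXT, "https://example.com/dev/any-page"),
        (ROOT_ROBOTS_TXT, "https://example.com/index.html"),
    ]
    for rules, url in checks:
        state = "crawlable" if is_crawlable(rules, "Googlebot", url) else "blocked"
        print(url, state)
```

Bear in mind that robots.txt only asks well-behaved crawlers to stay away. It does not restrict access to the site.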

.htaccess file example:

Place the .htaccess file in the root of your development folder, e.g.

/public_html/dev/.htaccess

not

/public_html/.htaccess

# Ideally block all access to the development site

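# apart from you and your client

# (Apache 2.2 syntax; on Apache 2.4 these directives need mod_access_compat, or use "Require ip" instead)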

Order Deny,Allow

Deny from all

# The IP from which you work

Allow from 12.34.56.78

# The IP of your client so they can view the dev site

Allow from 87.65.43.21

# Alternatively, just block the Google and Bing bots

RewriteEngine On

RewriteCond %{HTTP_USER_AGENT} (googlebot|bingbot) [NC]

# [F] returns 403 Forbidden and stops processing further rules

RewriteRule .* - [F]
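To see which visitors that RewriteCond pattern would catch, here is a rough Python sketch of the same match. The `is_blocked` helper is our own name for illustration. It mirrors the unanchored, case-insensitive match that the `[NC]` flag gives you, assuming the pattern is exactly `(googlebot|bingbot)`.

```python
import re

# Same pattern as the RewriteCond above; re.IGNORECASE plays the role of [NC].
BOT_PATTERN = re.compile(r"(googlebot|bingbot)", re.IGNORECASE)


def is_blocked(user_agent: str) -> bool:
    """Return True if the user agent string would match the RewriteCond pattern."""
    return BOT_PATTERN.search(user_agent) is not None


if __name__ == "__main__":
    samples = [
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
        "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:120.0) Gecko/20100101 Firefox/120.0",
    ]
    for ua in samples:
        print("blocked" if is_blocked(ua) else "allowed", "-", ua)
```

A user agent string is easy to fake and other crawlers are not covered by this pattern, so the IP allow-list is the stronger option of the two.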

Meta tags example:

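Sometimes you might want to ask Google not to index a single page. Just place these tags in the head of the page’s HTML. Google can only see them if robots.txt does not block it from crawling that page.

<!-- In the head of the page -->

<meta name="robots" content="noindex">

<meta name="googlebot" content="noindex">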
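If you want to confirm that a page really carries the tag, a small check along these lines can help. This is only a sketch using Python's built-in `html.parser`; the `RobotsMetaParser` class and `has_noindex` function are our own names for illustration, and it inspects an HTML string you supply rather than fetching anything.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values may be None when an attribute has no value.
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives.extend(part.strip().lower() for part in content.split(","))


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots/googlebot meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex"></head><body></body></html>'
    print("noindex found" if has_noindex(page) else "noindex missing")
```

Running this over the HTML of a few development pages before sharing the URL with a client is a quick way to catch a missing tag.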

A very simple change in the framework, CMS or the robots.txt/.htaccess file will keep Googlebot away from the dev site pages, so URLs from that site are not listed in Google and you do not have to spend ages in Google Webmaster Tools (now Google Search Console) requesting the removal of hundreds of URLs.

How do Aubergine do it?

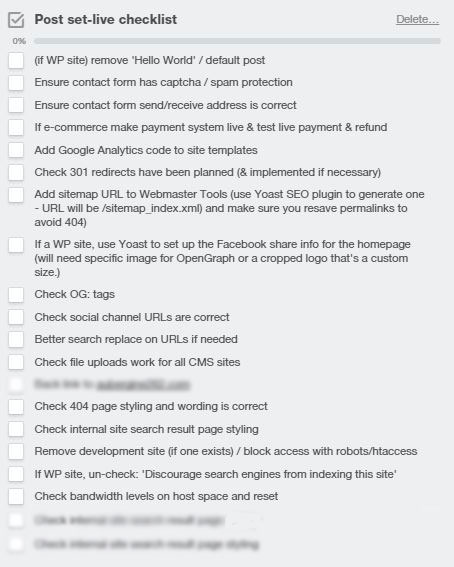

Every web development job we take on has a go-live checklist of essential tasks and settings to go through, and it’s then checked again by another developer in the team who hasn’t worked on the project.

Here’s our current go-live checklist:

If you would like to talk to one of our developers to discuss best practice go-live techniques, please feel free to call the studio on 01525 373020.